KMP (Knuth–Morris–Pratt) String Matching Algorithm

KMP (Knuth–Morris–Pratt) is a string matching algorithm well suited for finding a single match. For multiple matches, an improved Rabin-Karp is better.

Note: If you are preparing for an exam, do not read this article because it follows the original paper, which differs significantly from typical exam material.

This article is structured as follows (see sidebar for table of contents):

- Why KMP is often so difficult to understand in many resources, differences and caveats;

- Brief introduction and characteristics of KMP;

- How KMP works;

- The logic behind KMP;

- How the

nextarray is computed; - Why the

nextarray yields the appropriate shift; - Complete code.

The reason for introducing how it works before the logic is to let you see KMP in action first, making the logic easier to understand. Do not force through the "how it works" section – I know many blog examples are poor and incomplete. (The example from the original paper covers the three necessary cases very well, although it is a bit long.)

I also provide many examples in later sections that can help with memorization. After reading them, you can revisit the original paper's example and understand it instantly, saving time.

Some Rants

KMP is a culmination of work by three formidable individuals:

- Donald Knuth: Author of TAOCP and TeX, Turing Award winner in 1974.

- James H. Morris: Professor Emeritus of Computer Science at Carnegie Mellon University.

- Vaughan Pratt: Student of Knuth, completed his Stanford PhD in 20 months, became Professor Emeritus at Stanford in 2000.

This background (especially Knuth) makes the algorithm's underlying principles not so easy to grasp. I spent a day understanding what KMP is and how it relates to the various descriptions in textbooks and papers. This made the learning process somewhat amusing.

When studying KMP, I found that domestic textbooks often present it as an improvement over the naive algorithm, introducing the next array (partial match table), which records the length of the longest proper prefix that is also a suffix for each position in the substring. I don't know if you understood that explanation; I certainly didn't.

Then I found that Introduction to Algorithms approaches it as an improvement over finite automata, mentioning prefix computation with heavy mathematical rigor. I got some insight but had no clue how to write the code.

Neither explanation satisfied me.

Online blogs and code either follow the domestic textbook explanation or the Introduction to Algorithms one, or no explanation at all, yielding the same result.

Finally, I read the original KMP paper and understood why both domestic textbooks and Introduction to Algorithms are unclear: the algorithm is too clever to be explained simply. Also, although Knuth is now a giant, this paper was written early, with immature writing style and no fixed format, resulting in a somewhat loose structure.

Then the funniest part: different resources compute the KMP next array differently, so I've never seen an exam that asks to compute it because it's not unique. How non-unique is it?

- Whether indices start at 0 or 1.

- Whether the

nextarray indices start at 0 or 1. - Whether

next[0]is 0 or 1.

Do the permutations: theoretically there are 8 possible next arrays for a pattern (in reality fewer because character and array indices correspond, so about 3–4 possibilities). Each corresponds to a different computation method, but the results are equivalent. So if you have an exam, stick to the method in your textbook; don't study the algorithm itself like I did.

This article uses the original paper's method for computing the next array: strings and next indices start at 1. How ever, the code section follows C conventions: strings and next indices start at 0.

What is KMP?

String matching algorithms generally have two phases: preprocessing and matching. Preprocessing performs some work on the pattern to speed up subsequent matching.

When matching, both the naive algorithm and finite automaton may encounter mismatches and need to "backtrack" – re-examining the same part multiple times.

"Backtracking" here refers to moving backward in the matching string, not in the text.

For example, matching BCD in ABCBCD fails upon reaching the second B:

- Naive: resume comparison from the first

C(since the previousBdidn't match), and the pattern starts again from the first elementB. - Finite automaton: although it stays at the current

B, the next comparison is stillC, which involves a backtrack and ends up in the same place.

The former always restarts from the beginning of the pattern, while the latter sometimes does not, making it faster.

| Algorithm | Preprocessing Time | Matching Time |

|---|---|---|

| Naive | O(0) | O((n-m+1)m) |

| Finite Automaton | Θ(m | Σ |

But as seen in the previous article, the finite automaton preprocessing is complex: it must not only determine states that transition to the next state but also set backtracking points. Can we avoid backtracking altogether? That is, once a character in the text is examined, it is never examined again.

KMP achieves this by reducing the need to backtrack: instead of moving the pattern pointer backward in the pattern, it shifts the entire pattern to the right, never re-examining characters.

Thus, we only need a one-dimensional array (instead of a 2D array), whose length equals the pattern length (instead of pattern length × alphabet size), leading to significant improvements in both time and space.

Time improves because preprocessing is simpler.

| Algorithm | Preprocessing Time | Matching Time |

|---|---|---|

| Naive | O(0) | O((n-m+1)m) |

| Rabin-Karp | Θ(m) | O((n-m+1)m) |

| Finite Automaton | Θ(m | Σ |

| KMP | Θ(m) | Θ(n) |

However, as with other string matching algorithms, preprocessing is the most important and complex part of KMP. Shifting right is simple, but calculating the shift amount is the key.

How KMP Works

Let's explain how KMP works using the example from the original paper.

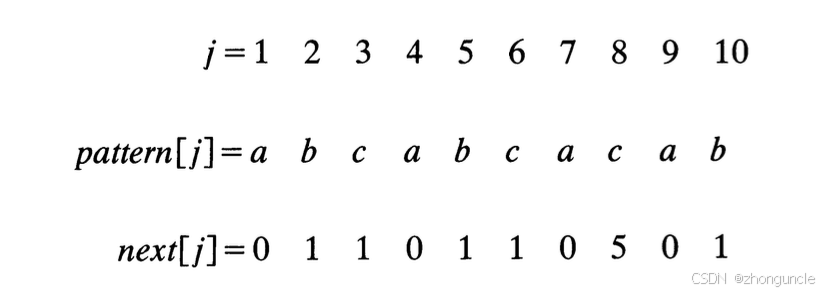

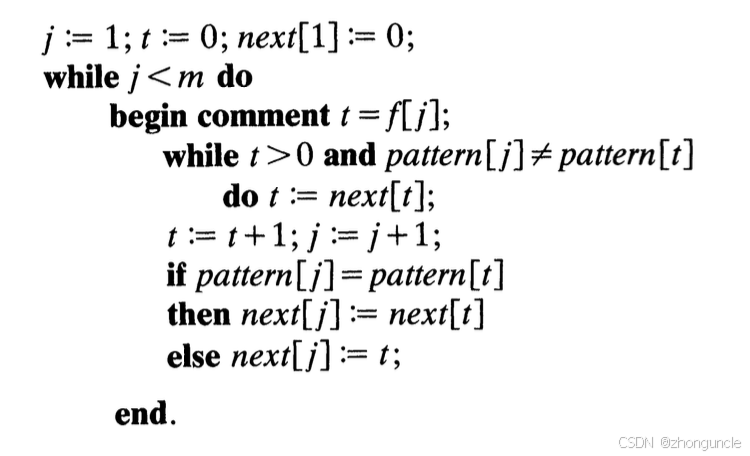

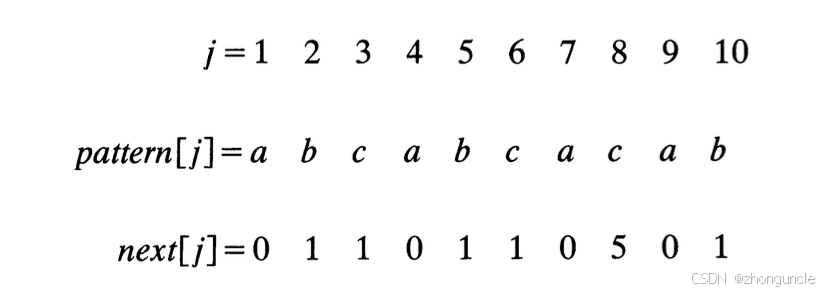

The following figure shows the pattern and its corresponding next array elements:

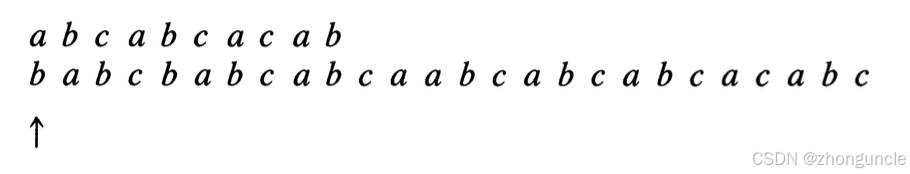

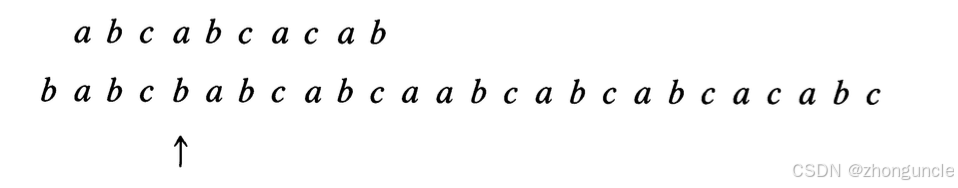

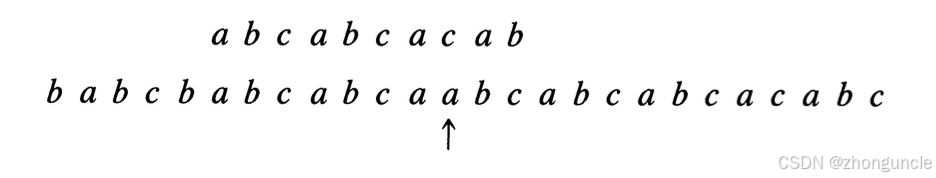

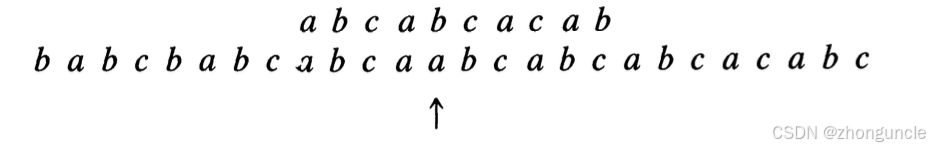

In the figures below, the top line is the pattern, the middle line is the text, and the bottom line indicates the characters to be examined:

The first character mismatches, so the pattern shifts right by 1 - next[1] = 1 position. Since next[1]=0, the comparison restarts from the beginning. Then mismatch occurs at the 4th character:

Now next[4]=0, so shift right by 4 - 0 = 4, restart from the beginning. Then mismatch at the 8th character:

Now next[8]=5, shift right by 8-5=3, and compare starting from the 5th character.

If you wonder why only 3 positions are shifted, the section "Why

j - next[j]is the shift amount" will explain.

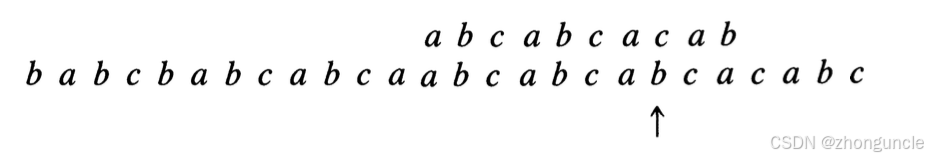

This time mismatch at the 5th character:

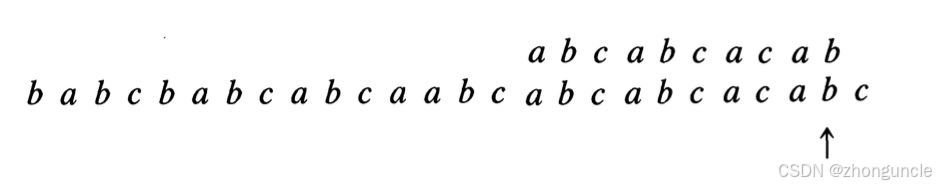

next[5]=1, shift right by 5-1=4, restart from the beginning. Mismatch again at the 8th character:

Shift right by 3, compare from the 5th. Now all match:

The Logic Behind KMP

KMP uses the same algorithm for preprocessing and matching, as noted in both Introduction to Algorithms and the original paper.

The difference:

- Preprocessing matches the pattern against itself;

- Matching matches the pattern against another string.

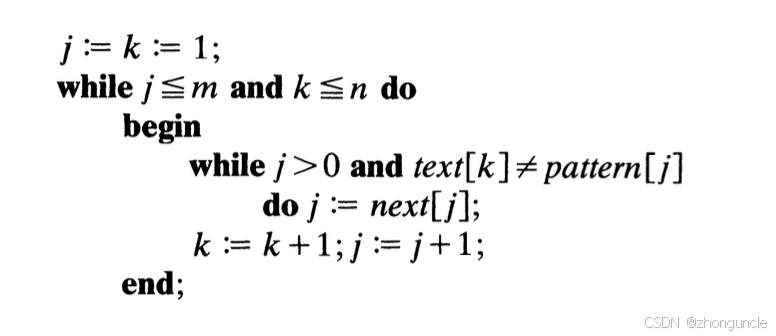

The algorithm's structure:

Place pattern at the left end

while pattern not fully matched and text not exhausted

while pattern character != current text character

shift pattern to the right by appropriate amount

advance to next text character

Code form:

What does "shift pattern to the right by appropriate amount" mean? It uses the equation:

k = p + j

k: index in the text;j: index in the pattern;p: a value that makes the equation hold (essentially the offset, can be rearranged top = k - j).

Usually, we fix k and p to match the string, because knowing two determines the third.

This equation is the core idea of KMP. The correctness proof and the computation of next rely on it. In the section "Why index - next[index] is the shift amount", I will explain why this is the core idea.

Proving correctness means the algorithm can be mathematically justified, ensuring it is valid and rigorous. Without proof, even extensive testing might miss errors.

How the next Array is Derived

From the process above, the key to KMP is the next array. How is it computed?

Let's see how the example in the original paper computes next.

In the original paper, they introduce something called f[x]. Recall that preprocessing and matching use the same algorithm but with different objects; the j in the following demonstration corresponds to that.

f[x] is for the text and pattern, but when computing next, both are the pattern. Don't overcomplicate by following the paper's notation; in the code below, f[x] is just a comment.

The paper's description of the next computation has the following structure:

That's hard to understand. So let's use the paper's example step by step:

Initial state:

text: a b c a b c a c a b

pattern: a b c a b c a c a b -> first element is conventionally 0 or -1; here we set next[1]=0

(p=text index; k=p+j; j=pattern index)

First match (p=1, k=2, j=1):

|

text: a b c a b c a c a b

pattern: a b c a b c a c a b -> text[p+j] != pattern[j], next[2]=j=1

Second match (p=2, k=3, j=1):

|

text: a b c a b c a c a b

pattern: a b c a b c a c a b -> text[k] != pattern[j], next[3]=j=1

Third match (p=3, k=4, j=1):

|

text: a b c a b c a c a b

pattern: a b c a b c a c a b -> equal, next[4]=next[j]=next[1]=0

(When equal, only advance the check pointer; do not move pattern, i.e., p unchanged)

Fourth match (p=3, k=5, j=2):

|

text: a b c a b c a c a b

pattern: a b c a b c a c a b -> equal, next[5]=next[j]=next[2]=1

Fifth match (p=3, k=6, j=3):

|

text: a b c a b c a c a b

pattern: a b c a b c a c a b -> equal, next[6]=next[j]=next[3]=1

Sixth match (p=3, k=7, j=4):

|

text: a b c a b c a c a b

pattern: a b c a b c a c a b -> equal, next[7]=next[j]=next[4]=next[1]=0

Seventh match (p=3, k=8, j=5):

|

text: a b c a b c a c a b

pattern: a b c a b c a c a b -> not equal, next[8]=j=5

Eighth match (p=8, k=9, j=1):

|

text: a b c a b c a c a b

pattern: a b c a b c a c a b -> equal, next[9]=next[j]=next[1]=0

Ninth match (p=8, k=10, j=2):

|

text: a b c a b c a c a b

pattern: a b c a b c a c a b -> equal, next[10]=next[j]=next[2]=1

This matches the result from the paper:

So:

- If characters match, only advance the check pointer (p unchanged), and set

next[k] = next[j]. - If they mismatch or pattern is exhausted, set

next[k] = next[j]as well.

However, if you compute next using some textbook methods, you'll get different values.

For example, using prefix-suffix sets:

a: prefix and suffix are empty → 0.ab: prefix {a}, suffix {b} → intersection empty → 0.abc: prefixes {a,ab}, suffixes {c,bc} → empty → 0.abca: prefixes {a,ab,abc}, suffixes {a,ca,bca} → intersection {a} → 1.- ...

From the start, you can see differences. Yet the shift distances and final results are equivalent. However, if you use this method's next array, ensure indices start at 0 (i.e., the j in the figure), otherwise errors occur.

Some textbooks suggest computing shift distances based only on matched parts. I find that unnecessary; handling indices properly is easier and faster.

nextval

Another reason I recommend the original paper's method: some textbooks mention another array nextval as an improvement, but nextval actually corresponds to the original paper's method.

Why index - next[index] is the Shift Amount

Mathematically proving KMP's correctness is complex; the paper does it. Here I'll only give an intuitive explanation for points that might interest readers.

The

nextused here is computed according to the paper's method; if you have a different one, convert accordingly.

From the previous section, you see that next is the current pattern index. When matching, we operate on the text index. If we know the pattern index (i.e., next), then according to the equation k = p + j, we can compute the shift.

So the question becomes: Why is the shift for self-matching equal to the shift for pattern matching?

First, an example to show that they are indeed equal: text = ABABBABC, pattern = ABABC. Compute next:

Initial state:

text: A B C -> next[1]=0

pattern: A B C

First match (p=1, k=2, j=1):

|

text: A B C

pattern: A B C -> mismatch, next[2]=j=1

Second match (p=2, k=3, j=1):

|

text: A B C

pattern: A B C -> mismatch, next[3]=j=1

Result:

| Index | 1 | 2 | 3 |

|---|---|---|---|

| Char | A | B | C |

| next | 0 | 1 | 1 |

Now suppose we don't know the text, only the pattern.

text: A B A B B A B C

pattern: A B C

KMP matches until the third character A mismatches. We know the first three text characters are ABX (X≠C, otherwise match). Since X≠C, it could be A (the first character of pattern), so we can start from the same position next time.

Understand that we don't need to remember the initial

AB; because we reached the mismatch, the prefixABwas matched.

Now using next: 3 - next[3] = 3 - 1 = 2, shift right by 2. Result:

text: A B A B B A B C

pattern: A B C

Same as above. Continue: now we know the first 5 text characters are ABABX (X≠C). Shift pattern sothat it starts at X:

text: A B A B B A B C

pattern: A B C

Mismatch at first character; we know first 6 text characters are ABABBX (X≠A). Since no match possible starting from X, skip this character:

text: A B A B B A B C

pattern: A B C

Now match succeeds. Return index 6 (0-based: 5).

Now why is the self-matching shift equal to pattern matching shift?

Borrow the concept of "reference frame" from physics. This is not my idea; the original paper mentions "relative" concepts.

The pattern has internal "movement", and the pattern as a whole also "moves" over the text. "Movement" here means shifting right.

Since shifting right is one-dimensional and direction is fixed, we have a simple transformation:

Absolute coordinate = relative origin + relative coordinate

Here "relative origin" is the position in the pattern used for comparison, and "relative coordinate" is the self-matching offset.

Absolute offset = relative origin + relative offset

This is exactly the earlier equation:

k = p + j

With this relation, whenever we need to shift, we can find the previously matched part before the compared character and move accordingly:

Relative origin = Absolute offset - Relative offset

Where:

relative originis the movement distance of the origin (if starting from 0, it is the shift amount of the text index), i.e., the appropriate shift position;Absolute offsetis the text index;Relative offsetis the pattern index.

Converting:

p = k - j

This distance depends only on the pattern, not on the text.

Honestly, when I understood this, I was amazed at the brilliance of this algorithm conceived fifty years ago.

If you still don't understand, see the section "Building the State Transition Table" in the article about finite automata. The idea is similar; the difference is that the finite automaton includes the current input character when searching for repetition, while KMP does not.

Complete Code

As mentioned, preprocessing and matching use the same algorithm, with similar code structure. The following code is based on the original paper but with indices adjusted to start from 0 (C style).

#include <stdio.h>

void getNext(char pattern[], int m, int next[]) {

next[0] = -1;

int j = 0, t = -1;

while (j < m) {

while (t >= 0 && pattern[j] != pattern[t]) {

t = next[t];

}

j++; t++;

if (pattern[t] == pattern[j]) {

next[j] = next[t];

} else {

next[j] = t;

}

}

}

int kmp(char text[], char pattern[], int n, int m, int next[]) {

int j = 0, k = 0;

while (j < m && k < n) {

while (j >= 0 && text[k] != pattern[j]) {

j = next[j];

}

k++; j++;

}

if (j == m) {

return k - j;

}

return -1;

}

int main() {

char text[] = "babcbabcabcaabcabcabcabcacabc";

char pattern[] = "abcabcacab";

int n = sizeof(text) / sizeof(char) - 1; // exclude null terminator

int m = sizeof(pattern) / sizeof(char) - 1;

int next[m];

for (int i = 0; i < m; i++) next[i] = 0;

getNext(pattern, m, next);

int index = kmp(text, pattern, n, m, next);

printf("Match at index %d\n", index);

return 0;

}

Output:

Match at index 18

Hope this helps those in need.

First article: "String Matching Algorithms 1/3: Naive and Rabin-Karp" - ZhongUncle's CSDN

References

Introduction to Algorithms, Chapter 32.

Knuth, Donald; Morris, James H.; Pratt, Vaughan (1977). "Fast pattern matching in strings". SIAM Journal on Computing. (Original KMP paper.)