Let GPT-4 Fix Golang Data Race Issues (Continued)

1. What I Thought

I thought the GoPool project would be a flash in the pan, quietly gathering dust on GitHub.

The story of the GoPool project's birth: "In Just Three Days, I Used GPT-4 to Generate the Top-Performing Golang Worker Pool, Easily Beating the GitHub 10k-Star Project"

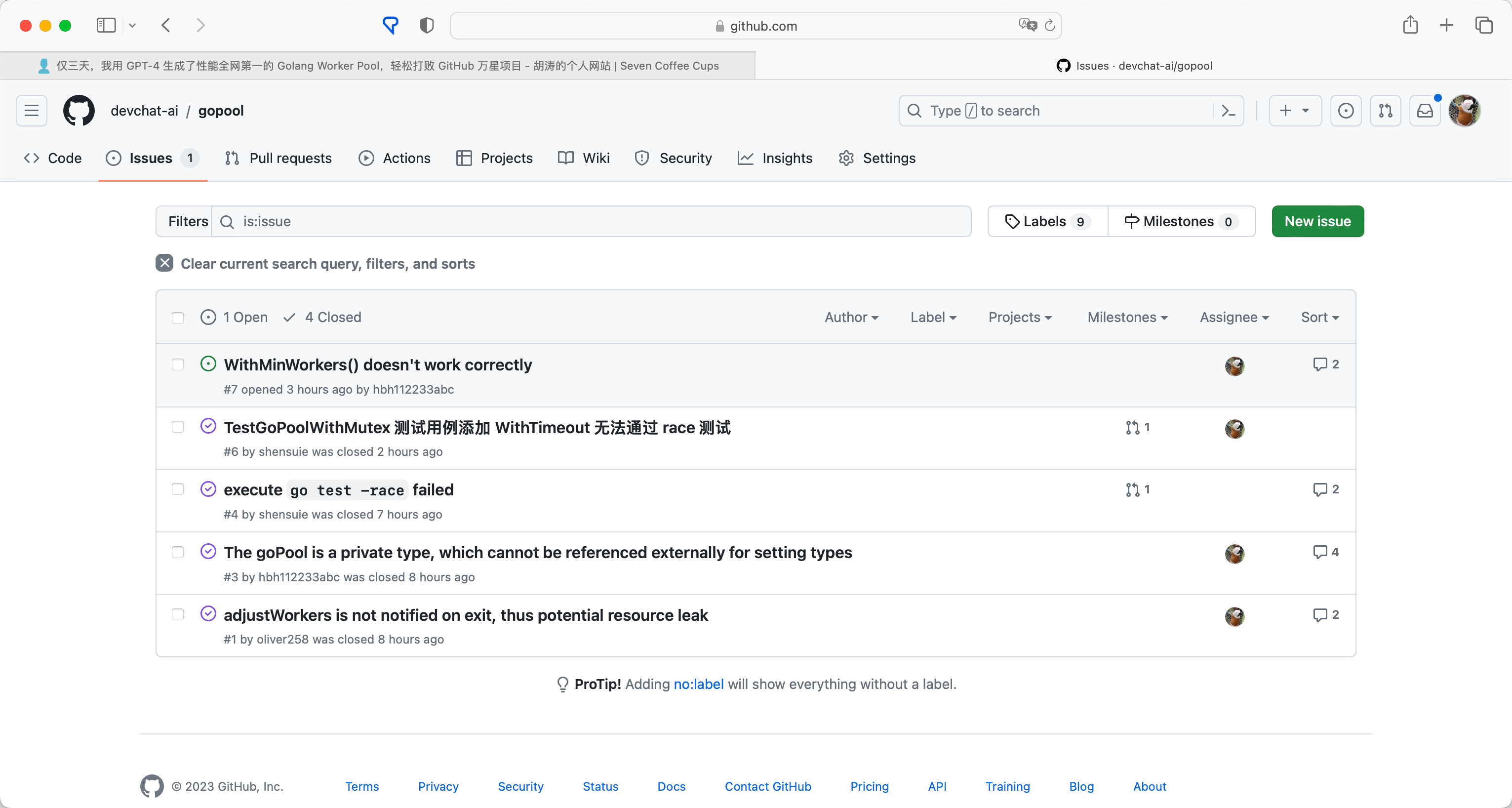

However, in the past couple of days, more and more people have started trying GoPool and have discovered some bugs, which they reported on GitHub.

So today, I'll continue sharing the process of using GPT-4 to resolve issues. Whether you're interested in Golang or GPT-4, the following content will be right up your alley.

2. The Persistent Data Race Issue

I had just published an article related to data races:

- "Let GPT-4 Fix Golang Data Race Issues"

In that article, I introduced how to use GPT-4 to solve a data race problem in a unit test, and then submitted a PR:

- Refactor TestGoPoolWithRetry to use atomic operations

Before I could even take a sip of water, another user filed a new issue:

- TestGoPoolWithMutex test case fails race test when adding WithTimeout

Well, it looks like I can't leave work early today.

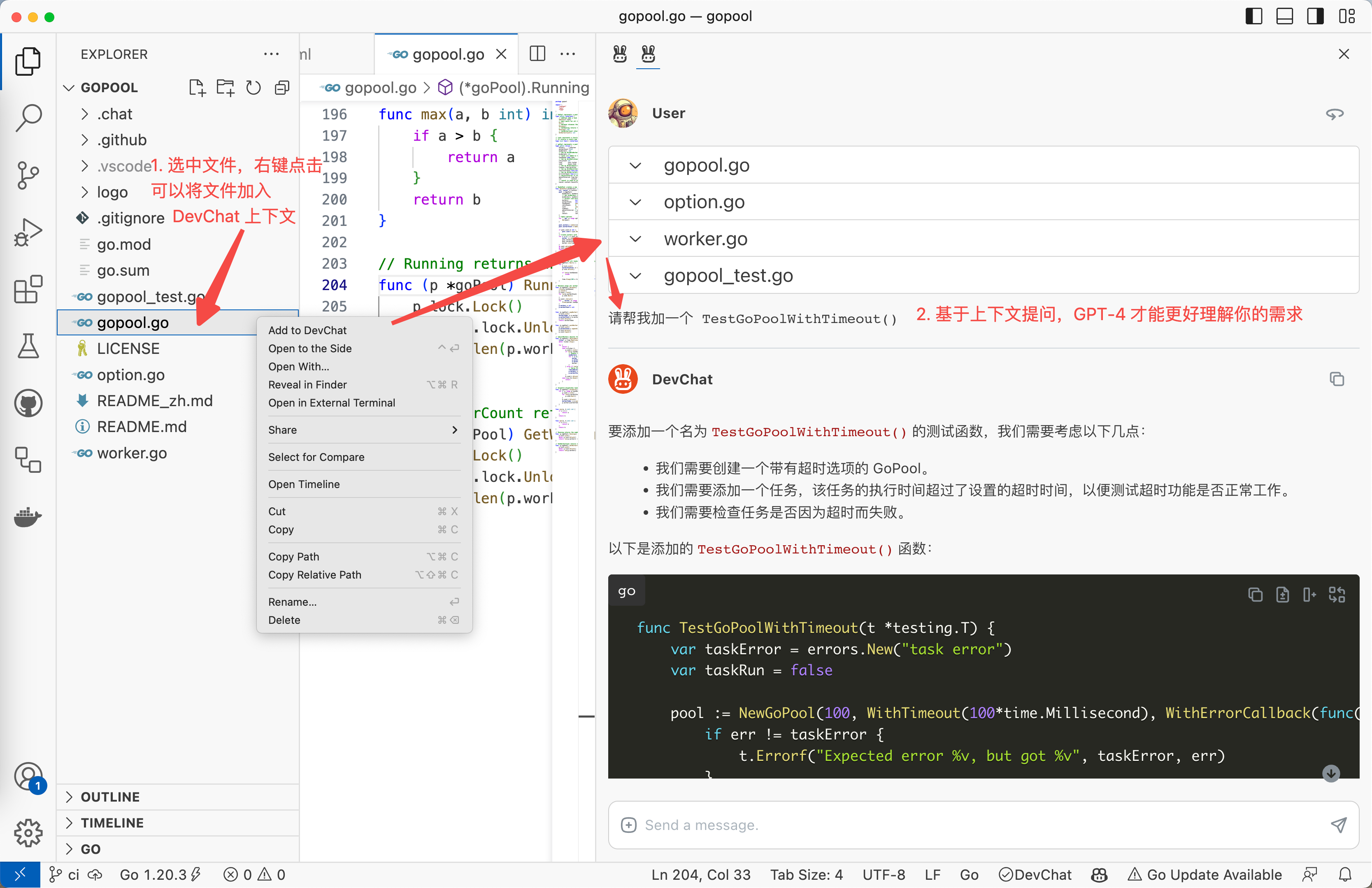

3. Standard Procedure: Unleash GPT-4

The following interaction was done using DevChat with GPT-4. If you're interested in DevChat, feel free to checkout this article:

- "DevChat: Seamlessly Integrate GPT-4 into VS Code for the Ultimate Programming Experience"

- If you want to sign up for DevChat, click this link for free registration. DevChat is still in public beta and is free.

3.1 Reproducing the Issue

The user mentioned in the issue that they added WithTimeout(10*time.Microsecond) to the TestGoPoolWithMutex() test case and encountered a data race problem. The code is as follows:

func TestGoPoolWithMutex(t *testing.T) {

pool := NewGoPool(100, WithLock(new(sync.Mutex)), WithTimeout(10*time.Microsecond))

defer pool.Release()

for i := 0; i < 1000; i++ {

pool.AddTask(func() (interface{}, error) {

time.Sleep(10 * time.Millisecond)

return nil, nil

})

}

pool.Wait()

}

I was able to reproduce this issue consistently on my local machine. So, the next step was to simplify the scenario by creating a separate unit test to verify the WithTimeout(10*time.Microsecond) feature.

3.2 Let GPT-4 Write a Unit Test

- I said to DevChat:

DevChat's full response:

To add a test function called TestGoPoolWithTimeout(), we need to consider the following points:

- We need to create a GoPool with a timeout option.

- We need to add a task that takes longer to execute than the set timeout, so we can test if the timeout functionality works correctly.

- We need to check if the task fails due to timeout.

Here is the added TestGoPoolWithTimeout() function:

func TestGoPoolWithTimeout(t *testing.T) {

var taskError = errors.New("task error")

var taskRun = false

pool := NewGoPool(100, WithTimeout(100*time.Millisecond), WithErrorCallback(func(err error) {

if err != taskError {

t.Errorf("Expected error %v, but got %v", taskError, err)

}

taskRun = true

}))

defer pool.Release()

pool.AddTask(func() (interface{}, error) {

time.Sleep(200 * time.Millisecond)

return nil, taskError

})

pool.Wait()

if !taskRun {

t.Errorf("Expected task to run and timeout, but it did not run")

}

}

This test function first creates a GoPool with a 100-millisecond timeout. Then, it adds a task that takes 200 milliseconds to complete, which exceeds the set timeout. If the task fails due to timeout, it will call the error callback and set taskRun to true. Finally, the test function checks if taskRun is true; if not, it's an error indicating the task didn't run.

This code "looks elegant but doesn't work." Whether you can find the error determines if this code can actually be used. Did you spot the problem? (The answer is revealed below)

- I said to DevChat again:

This test case didn't pass. I noticed that in WithErrorCallback, you report an error if err != taskError. However, when a timeout occurs, the error returned by the task is not taskError but "task timed out". So, shouldn't the != be changed to ==?

DevChat's response:

You are correct; I made a mistake. In this test case, we should expect the error to be "task timed out", not taskError. Therefore, in the WithErrorCallback, we should check if the error equals "task timed out". Here's the corrected test function:

func TestGoPoolWithTimeout(t *testing.T) {

var taskRun = false

pool := NewGoPool(100, WithTimeout(100*time.Millisecond), WithErrorCallback(func(err error) {

if err.Error() != "task timed out" {

t.Errorf("Expected error 'task timed out', but got %v", err)

}

taskRun = true

}))

defer pool.Release()

pool.AddTask(func() (interface{}, error) {

time.Sleep(200 * time.Millisecond)

return nil, nil

})

pool.Wait()

if !taskRun {

t.Errorf("Expected task to run and timeout, but it did not run")

}

}

In this corrected version, we check if the error equals "task timed out" in the WithErrorCallback. If not, we report an error. We also modified the added task so that it doesn't return an error on execution, because the expected error is caused by the timeout, not by the task itself.

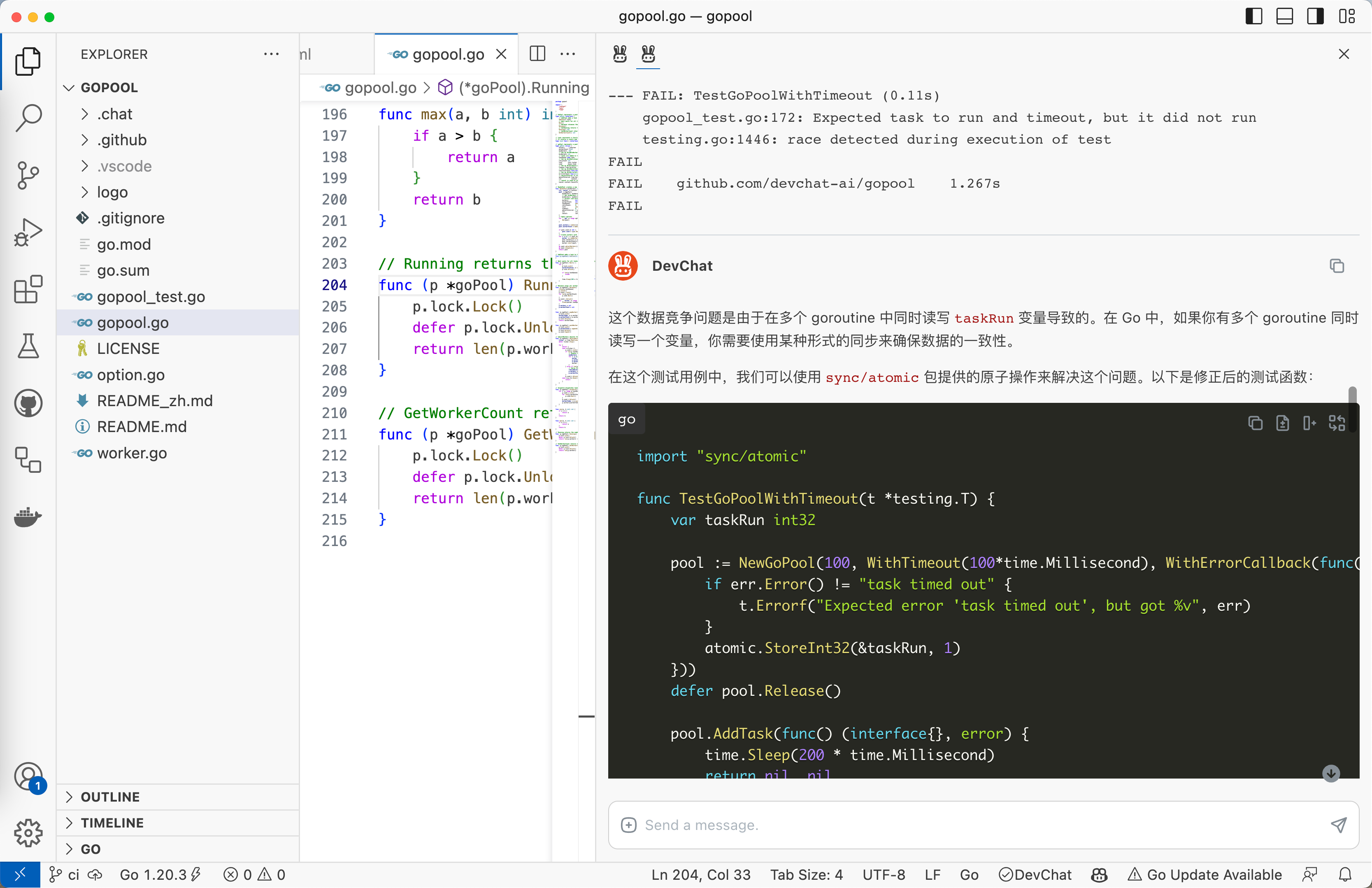

At this point, TestGoPoolWithTimeout() could actually pass. But when I ran go test with the -race flag, interesting:

WARNING: DATA RACE

Let GPT-4 fix the bug that GPT-4 wrote!

So I earnestly (and a bit exhaustedly) sent the error message to DevChat:

This test case passes, but if you run the race detector, it reports an error:

go test -race ./...

==================

WARNING: DATA RACE

Write at 0x00c00001a14f by goroutine 641:

github.com/devchat-ai/gopool.TestGoPoolWithTimeout.func1()

/Users/danielhu/go/mycode/gopool/gopool_test.go:160 +0xf4

github.com/devchat-ai/gopool.(*worker).handleResult()

/Users/danielhu/go/mycode/gopool/worker.go:92 +0x94

github.com/devchat-ai/gopool.(*worker).start.func1()

/Users/danielhu/go/mycode/gopool/worker.go:27 +0xa4

Previous read at 0x00c00001a14f by goroutine 541:

github.com/devchat-ai/gopool.TestGoPoolWithTimeout()

/Users/danielhu/go/mycode/gopool/gopool_test.go:171 +0x1a8

testing.tRunner()

/usr/local/go/src/testing/testing.go:1576 +0x188

testing.(*T).Run.func1()

/usr/local/go/src/testing/testing.go:1629 +0x40

Goroutine 641 (running) created at:

github.com/devchat-ai/gopool.(*worker).start()

/Users/danielhu/go/mycode/gopool/worker.go:23 +0xdc

github.com/devchat-ai/gopool.NewGoPool()

/Users/danielhu/go/mycode/gopool/gopool.go:75 +0x490

github.com/devchat-ai/gopool.TestGoPoolWithTimeout()

/Users/danielhu/go/mycode/gopool/gopool_test.go:156 +0x138

testing.tRunner()

/usr/local/go/src/testing/testing.go:1576 +0x188

testing.(*T).Run.func1()

/usr/local/go/src/testing/testing.go:1629 +0x40

Goroutine 541 (running) created at:

testing.(*T).Run()

/usr/local/go/src/testing/testing.go:1629 +0x5e4

testing.runTests.func1()

/usr/local/go/src/testing/testing.go:2036 +0x80

testing.tRunner()

/usr/local/go/src/testing/testing.go:1576 +0x188

testing.runTests()

/usr/local/go/src/testing/testing.go:2034 +0x700

testing.(*M).Run()

/usr/local/go/src/testing/testing.go:1906 +0x950

main.main()

_testmain.go:63 +0x300

==================

--- FAIL: TestGoPoolWithTimeout (0.11s)

gopool_test.go:172: Expected task to run and timeout, but it did not run

testing.go:1446: race detected during execution of test

FAIL

FAIL github.com/devchat-ai/gopool 1.267s

FAIL

This time GPT-4 didn't apologize. How rude! Bad review:

DevChat's full response this time:

This data race issue is caused by concurrently reading and writing the taskRun variable in multiple goroutines. In Go, if you have multiple goroutines reading and writing a variable simultaneously, you need to use some form of synchronization to ensure data consistency.

In this test case, we can use atomic operations from the sync/atomic package to solve this problem. Here's the corrected test functon:

import "sync/atomic"

func TestGoPoolWithTimeout(t *testing.T) {

var taskRun int32

pool := NewGoPool(100, WithTimeout(100*time.Millisecond), WithErrorCallback(func(err error) {

if err.Error() != "task timed out" {

t.Errorf("Expected error 'task timed out', but got %v", err)

}

atomic.StoreInt32(&taskRun, 1)

}))

defer pool.Release()

pool.AddTask(func() (interface{}, error) {

time.Sleep(200 * time.Millisecond)

return nil, nil

})

pool.Wait()

if atomic.LoadInt32(&taskRun) == 0 {

t.Errorf("Expected task to run and timeout, but it did not run")

}

}

In this corrected version, we use the StoreInt32 and LoadInt32 functions from the sync/atomic package to safely update and read the taskRun variable. This way, even if multiple goroutines operate on this variable simultaneously, there won't be a data race.

Okay, at least the DATA RACE problem is resolved.

3.3 Fixing the Logic Hole in Wait()

Earlier, we solved the DATA RACE issue, but the TestGoPoolWithTimeout() output is:

$ go test -race ./...

--- FAIL: TestGoPoolWithTimeout (0.11s)

gopool_test.go:172: Expected task to run and timeout, but it did not run

FAIL

FAIL github.com/devchat-ai/gopool 1.203s

FAIL

Now it's getting brain-twisting. Let's keep throwing this log at GPT-4.

DevChat's response this time:

It seems the test case failed because the expected task didn't run. This might be because

pool.Wait()returned before the task timeout. The current implementation ofpool.Wait()simply waits until the task queue is empty, but this doesn't guarantee that all tasks have finished execution, especially in cases of task timeout.To fix this, we need to add logic to

pool.Wait()to ensure that all tasks have completed execution. One possible solution is to use async.WaitGroupto track the number of tasks currently executing. When a task starts execution, we callWaitGroup.Add(1), and when it finishes, we callWaitGroup.Done(). Then, we can callWaitGroup.Wait()inpool.Wait()to wait for all tasks to complete.This modification involves multiple functions, so I suggest you make this change based on the specifics of your codebase. If you need more specific help, please provide more code context, and I'll be happy to assist.

GPT-4 identified the problem in pool.Wait(), but the proposed solution made me hesitate. The current Wait() method looks like this:

// Wait waits for all tasks to be dispatched.

func (p *goPool) Wait() {

for len(p.taskQueue) > 0 {

time.Sleep(100 * time.Millisecond)

}

}

The logic here is to wait until taskQueue is empty, meaning all tasks have been dispatched to corresponding workers. GPT-4's analysis is correct; it indeed lacks the logic to "wait for all tasks to complete execution."

- I said to DevChat:

When a task is executed, there is a popWorker() action, and when it completes, there is a pushWorker() action. So, if len(goPool.workerStack) == len(goPool.workers), doesn't that also indicate that all tasks are completed?

DevChat's response:

Your understanding is correct. In this specific implementation of goPool, whenever a task starts execution, a worker is popped from the workerStack, and when the task completes, the worker is pushed back onto the workerStack. Therefore, if len(goPool.workerStack) == len(goPool.workers), it indeed means that all tasks have completed.

Thus, you can modify the Wait() function to wait until all workers have returned to the workerStack. Here's the modified Wait() function:

// Wait waits for all tasks to be dispatched and completed.

func (p *goPool) Wait() {

for len(p.taskQueue) > 0 || len(p.workerStack) != len(p.workers) {

time.Sleep(100 * time.Millisecond)

}

}

In this modified version, the Wait() function waits until the task queue is empty and all workers have returned to the workerStack. This way, when Wait() returns, we can be sure all tasks have completed.

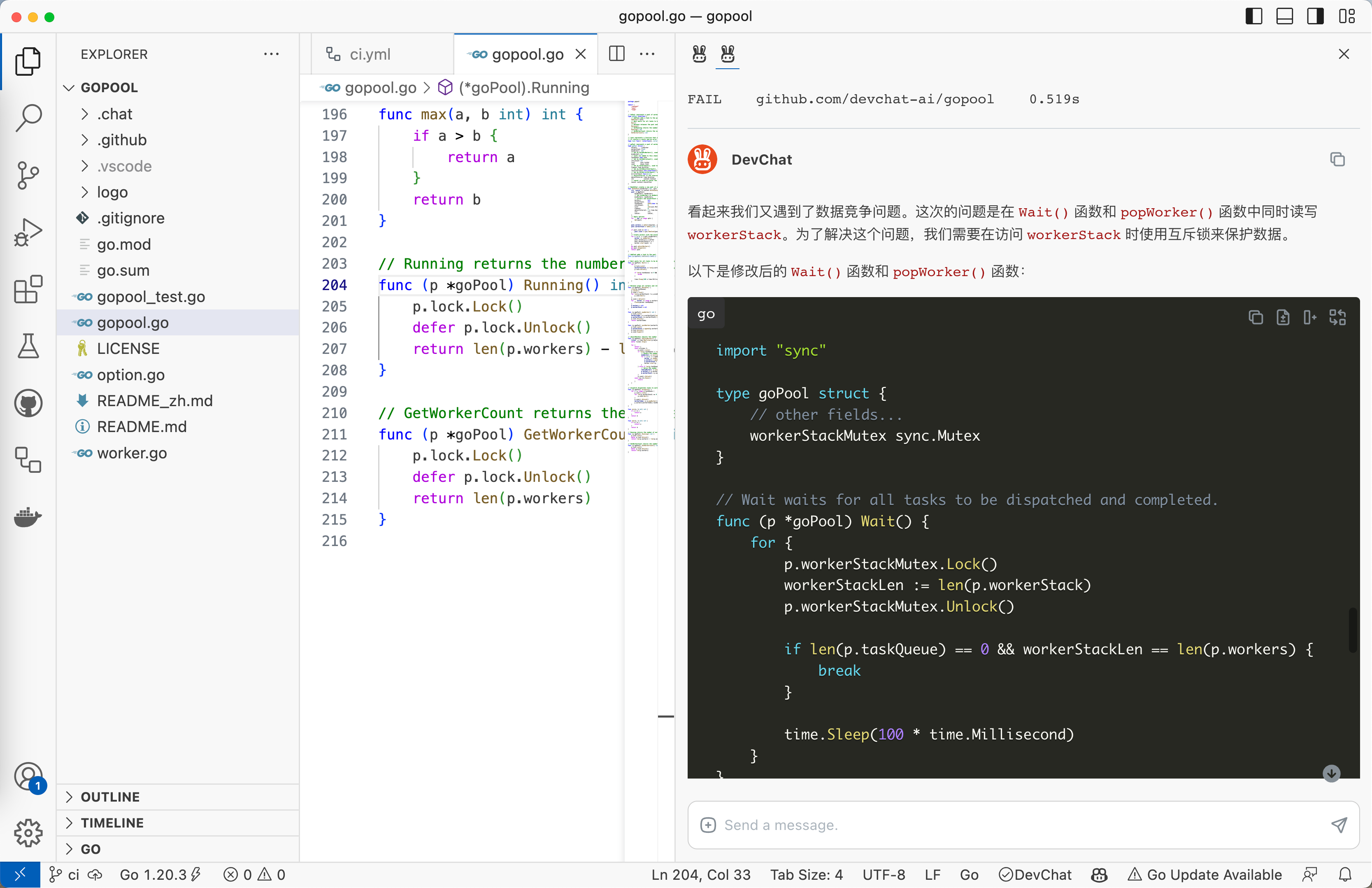

After making this change, I continued running the Race Detector, and the result was:

$ go test -race -run TestGoPoolWithTimeout

==================

WARNING: DATA RACE

Read at 0x00c000146298 by goroutine 6:

github.com/devchat-ai/gopool.(*goPool).Wait()

/Users/danielhu/go/mycode/gopool/gopool.go:89 +0x7c

github.com/devchat-ai/gopool.TestGoPoolWithTimeout()

/Users/danielhu/go/mycode/gopool/gopool_test.go:169 +0x1a0

testing.tRunner()

/usr/local/go/src/testing/testing.go:1576 +0x188

testing.(*T).Run.func1()

/usr/local/go/src/testing/testing.go:1629 +0x40

Previous write at 0x00c000146298 by goroutine 118:

github.com/devchat-ai/gopool.(*goPool).popWorker()

/Users/danielhu/go/mycode/gopool/gopool.go:113 +0xec

github.com/devchat-ai/gopool.(*goPool).dispatch()

/Users/danielhu/go/mycode/gopool/gopool.go:164 +0x10c

github.com/devchat-ai/gopool.NewGoPool.func2()

/Users/danielhu/go/mycode/gopool/gopool.go:78 +0x34

Goroutine 6 (running) created at:

testing.(*T).Run()

/usr/local/go/src/testing/testing.go:1629 +0x5e4

testing.runTests.func1()

/usr/local/go/src/testing/testing.go:2036 +0x80

testing.tRunner()

/usr/local/go/src/testing/testing.go:1576 +0x188

testing.runTests()

/usr/local/go/src/testing/testing.go:2034 +0x700

testing.(*M).Run()

/usr/local/go/src/testing/testing.go:1906 +0x950

main.main()

_testmain.go:63 +0x300

==================

--- FAIL: TestGoPoolWithTimeout (0.21s)

testing.go:1446: race detected during execution of test

FAIL

exit status 1

FAIL github.com/devchat-ai/gopool 0.519s

What a disaster. Am I stuck with DATA RACE today???

I pasted this log directly to GPT-4, and GPT-4 lamented: It looks like we've encountered another data race problem.

DevChat's full response here:

It looks like we've encountered another data race problem. This time, the issue is concurrent read and write of workerStack in the Wait() function and the popWorker() function. To solve this, we need to use a mutex to protect the data when accessing workerStack.

Here are the modified Wait() and popWorker() functions:

import "sync"

type goPool struct {

// other fields...

workerStackMutex sync.Mutex

}

// Wait waits for all tasks to be dispatched and completed.

func (p *goPool) Wait() {

for {

p.workerStackMutex.Lock()

workerStackLen := len(p.workerStack)

p.workerStackMutex.Unlock()

if len(p.taskQueue) == 0 && workerStackLen == len(p.workers) {

break

}

time.Sleep(100 * time.Millisecond)

}

}

// popWorker pops a worker from the worker stack.

func (p *goPool) popWorker() *worker {

p.workerStackMutex.Lock()

defer p.workerStackMutex.Unlock()

if len(p.workerStack) == 0 {

return nil

}

w := p.workerStack[len(p.workerStack)-1]

p.workerStack = p.workerStack[:len(p.workerStack)-1]

return w

}

In this modified version, we added a workerStackMutex field to the goPool struct to provide mutual exclusion when accessing workerStack. In both the Wait() and popWorker() functions, we use this mutex to protect the read and write operations on workerStack, thus avoiding data race issues.

This modification seems to work, but it's not very elegant. This once again demonstrates the principle: "GPT can't help you with tasks beyond your comprehension." The code is generally good but has flaws. If you can understand it and make minor adjustments, GPT can help you improve efficiency; otherwise, chatting with GPT is just a waste of breath.

- I said to DevChat again:

But goPool already has a lock field. Can we just use that?

This time GPT-4 said "sorry". I'm relieved; she's becoming polite again.

DevChat's response:

I apologize for not noticing that the goPool struct already has a lock field. You can definitely use this existing mutex to protect access to workerStack, without needing to add a new one.

Here are the modified Wait() and popWorker() functions using the lock field to protect access to workerStack:

// Wait waits for all tasks to be dispatched and completed.

func (p *goPool) Wait() {

for {

p.lock.Lock()

workerStackLen := len(p.workerStack)

p.lock.Unlock()

if len(p.taskQueue) == 0 && workerStackLen == len(p.workers) {

break

}

time.Sleep(100 * time.Millisecond)

}

}

// popWorker pops a worker from the worker stack.

func (p *goPool) popWorker() *worker {

p.lock.Lock()

defer p.lock.Unlock()

if len(p.workerStack) == 0 {

return nil

}

w := p.workerStack[len(p.workerStack)-1]

p.workerStack = p.workerStack[:len(p.workerStack)-1]

return w

}

In this modified version, we use the lock field to protect read and write operations on workerStack, thus avoiding data race issues.

This response has a bit of "aftereffect"; because the earlier suggestion mentioned modifying popWorker(), it also gives the modification here. Actually, this method doesn't need to be changed. My original code is:

func (p *goPool) popWorker() int {

p.lock.Lock()

workerIndex := p.workerStack[len(p.workerStack)-1]

p.workerStack = p.workerStack[:len(p.workerStack)-1]

p.lock.Unlock()

return workerIndex

}

The check len(p.workerStack) == 0 is redundant because the dispatch() method that calls popWorker() looks like this:

// dispatch dispatches tasks to workers.

func (p *goPool) dispatch() {

for t := range p.taskQueue {

p.cond.L.Lock()

for len(p.workerStack) == 0 {

p.cond.Wait()

}

p.cond.L.Unlock()

workerIndex := p.popWorker()

p.workers[workerIndex].taskQueue <- t

}

}

Here, the len(p.workerStack) == 0 check ensures that when popWorker() is called, len(p.workerStack) != 0.

So, I finally adopted the Wait() method suggested by GPT-4:

func (p *goPool) Wait() {

for {

p.lock.Lock()

workerStackLen := len(p.workerStack)

p.lock.Unlock()

if len(p.taskQueue) == 0 && workerStackLen == len(p.workers) {

break

}

time.Sleep(100 * time.Millisecond)

}

}

In the end, I submitted this PR: Improve task execution and testing in goPool.

4. Summary

Once again: GPT, in most cases, cannot help you solve problems beyond your understanding.

Unless the code is very simple, if you can't understand the code written by GPT, it's highly likely that the code is unusable. Among 100 lines of code generated by GPT, there may be one wrong line. If you can spot that one line error and fix it, you save 99 lines' worth of time. Conversely, those 100 lines of non-working code are worthless to you.

In short, GPT can save you a lot of trouble, but it can't do everything for you.